Highlights

- Apple Pico-Banana-400K brings 400,000 high-quality image-editing samples for AI research.

- Built using Google’s Gemini-2.5 models for image generation and quality review.

- Released on GitHub under a non-commercial license for global AI researchers.

Apple has released a new research dataset called Pico-Banana-400K, and it’s one of the more notable moves the company has made in open AI research lately.

The dataset has 400,000 edited images, each generated and then reviewed for quality, and is meant for training AI systems that edit images based on written instructions.

The release addresses one of the major gaps in AI image editing: the lack of open, good-quality datasets for research.

What makes it more interesting is that Apple used Google’s Gemini-2.5 models to help generate and filter the data.

It is a notable example of one company’s research building directly on a competitor’s models.

Why does this dataset matter?

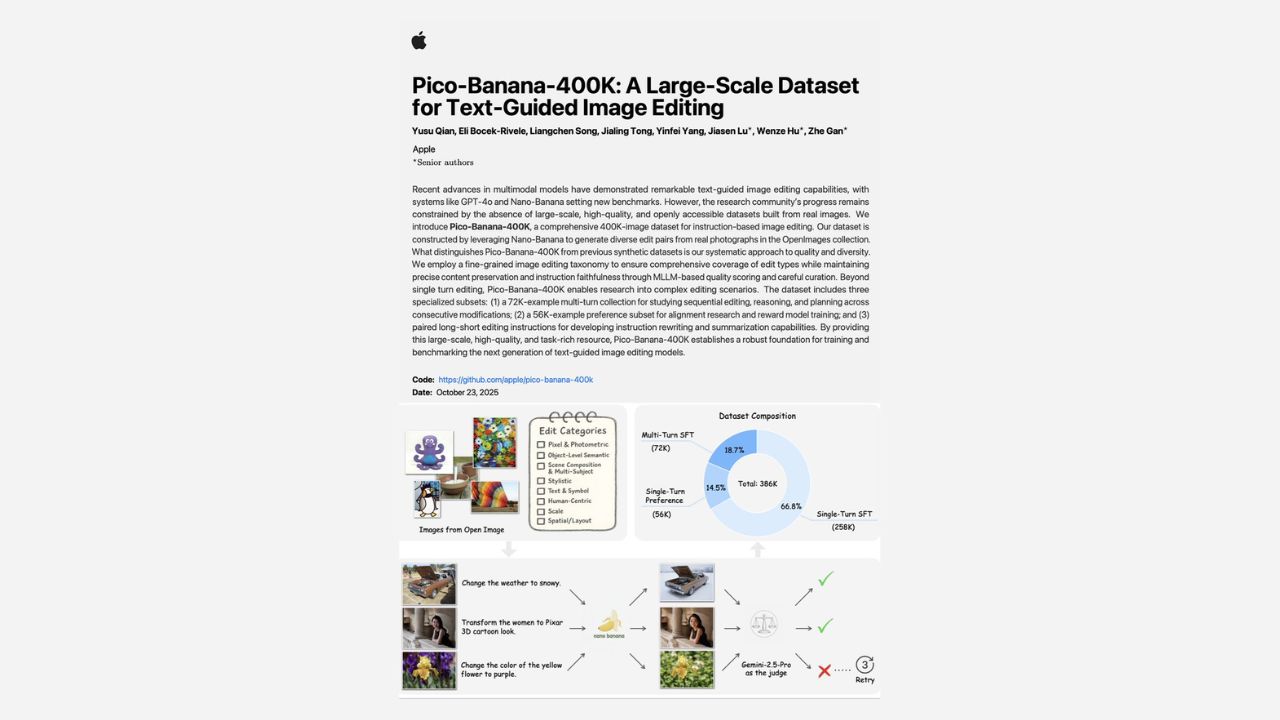

In their paper, “Pico-Banana-400K: A Large-Scale Dataset for Text-Guided Image Editing,” Apple’s team notes that most existing AI editing datasets are small, lack diversity, or are locked behind proprietary systems.

Because of this, researchers often find it hard to train or test new models in a consistent way.

The Pico-Banana dataset addresses that problem directly. It’s open for non-commercial use, meaning researchers can freely download it from GitHub, study it, and use it in their AI projects. However, it can’t be used for any commercial purpose.

Image Credits: Apple

How Apple built the Pico-Banana dataset

Apple’s researchers started by collecting a large set of real images from the OpenImages dataset, which includes people, objects, and scenes with text.

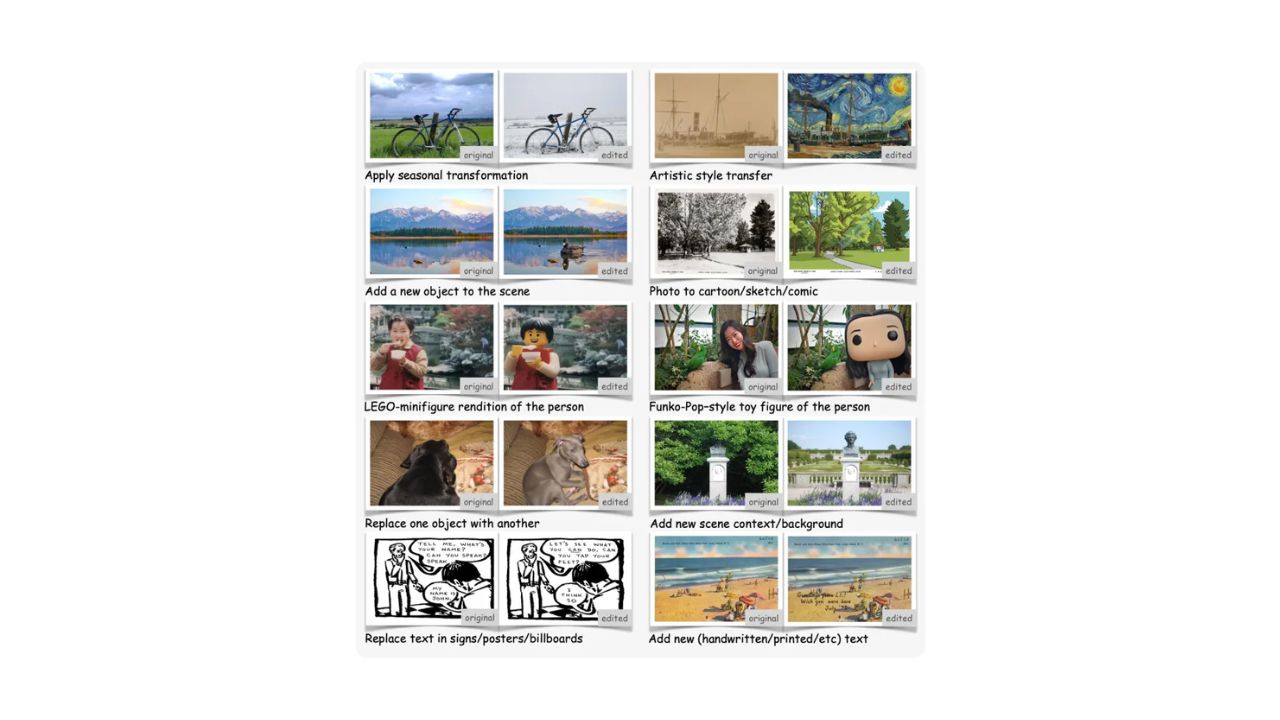

Then they prepared 35 different edit instructions divided into eight main categories. Some edits were simple, like applying a filter, while others were complex, such as turning a person into a cartoon figure or toy-style version.

Image Credits: Apple

To actually create the edited images, Apple used Google’s Nano-Banana (Gemini-2.5-Flash-Image) model.

After that, every generated image was reviewed by another model, Gemini-2.5-Pro, which checked how well the edit matched the prompt and how realistic the image looked.

Only images that passed both checks were added to the final Apple Pico-Banana-400K dataset. This process made sure the dataset maintained strong quality and variety.
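The generate-then-filter idea described above can be sketched in a few lines of Python. This is only an illustration of the general pattern, not Apple’s actual pipeline: the function names, the 0-to-1 scores, and the 0.7 thresholds are all assumptions, and the editor and judge are stand-in stubs rather than real calls to any Gemini model.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Candidate:
    source_image: str   # path or ID of the original photo
    instruction: str    # the text edit instruction
    edited_image: str   # path or ID of the generated edit


@dataclass
class Verdict:
    instruction_match: float  # hypothetical 0..1 score: does the edit follow the prompt?
    realism: float            # hypothetical 0..1 score: does the result look natural?


def build_dataset(
    sources: Iterable[str],
    instructions: List[str],
    edit_fn: Callable[[str, str], str],
    judge_fn: Callable[[Candidate], Verdict],
    min_match: float = 0.7,    # assumed threshold, not from the paper
    min_realism: float = 0.7,  # assumed threshold, not from the paper
) -> List[Candidate]:
    """Keep only the edits that pass both quality checks."""
    kept = []
    for src in sources:
        for instr in instructions:
            cand = Candidate(src, instr, edit_fn(src, instr))
            verdict = judge_fn(cand)
            if verdict.instruction_match >= min_match and verdict.realism >= min_realism:
                kept.append(cand)
    return kept


# Stand-in stubs so the sketch runs without any model access.
def fake_edit(src: str, instr: str) -> str:
    return f"{src}|{instr}"


def fake_judge(cand: Candidate) -> Verdict:
    # Pretend edits of the "blurry" source come out unrealistic.
    realism = 0.2 if "blurry" in cand.source_image else 0.9
    return Verdict(instruction_match=0.95, realism=realism)


demo = build_dataset(
    ["street.jpg", "blurry.jpg"],
    ["apply a vintage filter", "turn the person into a toy figure"],
    fake_edit,
    fake_judge,
)
print(len(demo), "of 4 candidate edits kept")
```

In a real setup, `edit_fn` and `judge_fn` would wrap calls to an image-editing model and a reviewing model; keeping them as plain callables makes the filtering logic easy to test on its own.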

What makes the Apple Pico-Banana dataset unique?

Unlike other datasets that only show one before-and-after image pair, Pico-Banana-400K also includes multi-turn edits, where an image goes through a series of up to five changes. This helps models learn how to follow a longer, step-by-step editing process.

It also includes preference pairs, which compare a good edit against a bad one. This helps AI models learn what to avoid, improving accuracy and reliability in real-world tasks.
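To make the two record types concrete, here is a rough Python sketch of how a multi-turn edit sequence and a preference pair might be represented. The class and field names are hypothetical and are not taken from the released dataset’s schema; only the five-step cap comes from the description above.

```python
from dataclasses import dataclass, field
from typing import List

MAX_TURNS = 5  # sequences run "up to five" edits, per the description above


@dataclass
class EditStep:
    instruction: str   # the text instruction for this turn
    output_image: str  # the image produced after applying it


@dataclass
class MultiTurnExample:
    source_image: str
    steps: List[EditStep] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject empty sequences and sequences longer than the cap.
        if not 1 <= len(self.steps) <= MAX_TURNS:
            raise ValueError(f"expected 1 to {MAX_TURNS} steps, got {len(self.steps)}")


@dataclass
class PreferencePair:
    source_image: str
    instruction: str
    preferred_image: str  # the edit judged to be good
    rejected_image: str   # the edit judged to be bad


session = MultiTurnExample(
    "cat.jpg",
    [
        EditStep("add a red hat", "cat_1.jpg"),
        EditStep("make the scene snowy", "cat_2.jpg"),
    ],
)
final_image = session.steps[-1].output_image
print(final_image)
```

Each step in a multi-turn example builds on the previous output, so the last step’s image reflects every instruction in the sequence.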

Apple acknowledged that some issues remain, especially with fine details and text inside images, but it presents the dataset as a strong foundation overall.

It’s designed to help build better text-guided image editing systems that understand prompts more clearly and produce cleaner, more natural edits. Researchers can read the full study on arXiv and download the dataset directly from GitHub.
